Multilingual Data Collection Services for AI Training

LanguageMark provides multilingual data collection services for AI and machine learning teams — gathering speech recordings, text corpora, dialogue data, and multimodal datasets across 197+ languages, including all 22 official Indian languages. Every dataset is collected by native-speaking contributors, verified by professional linguists, and delivered in your required format — ready for ASR, NLP, LLM, and conversational AI training.

- 197+ Languages

- 10 Years Experience

- Pilot Projects Available

- All 22 Indian Languages

- Quality over quantity

1,000 well-collected utterances from native speakers can outperform 10,000 crowd-sourced recordings of inconsistent quality.

- Domain specificity

A healthcare voice dataset needs medical terminology. A customer service dataset needs colloquial registers. Generic data produces generic models.

- Demographic diversity

Age, gender, regional accent, and dialect diversity must be built into the collection design, not added as an afterthought.

- Consent and compliance

All data collected by LanguageMark is gathered with full informed consent, ownership documentation, and GDPR-compatible data handling protocols.

What Is AI Training Data Collection, and Why Quality Matters More Than Volume

AI training data collection is the process of gathering raw data — speech recordings, written text, conversational dialogues, images, or video — from human contributors for use in training machine learning models. Unlike web-scraped data, collected data is purpose-built for a specific model requirement — with defined speaker demographics, linguistic variety, domain vocabulary, and recording conditions.

The most common failure in multilingual AI development is not a model architecture problem. It is a data problem. Models trained on insufficient or low-quality multilingual data produce unreliable outputs in non-English languages — regardless of how much compute was used in training.

Our Multilingual Data Collection Services

Speech & Voice Data Collection

Custom speech dataset creation for ASR, TTS, voice assistant, and spoken dialogue AI training. We recruit native-speaking contributors across defined demographic profiles, manage recording sessions in controlled and natural environments, and deliver verified, transcribed, and formatted audio datasets. Coverage: All 22 Indian languages + regional dialects + code-mixed Hindi-English + 170+ global languages.

- Best for: ASR model training, voice assistant development, IVR systems, speech-to-text for Indian languages, wake word detection, speaker identification.

Text Corpus Collection

Domain-specific text data collection for LLM pre-training, fine-tuning, and instruction-following datasets. We gather text from human contributors in defined domains — legal, medical, financial, conversational, technical — in your target languages, ensuring the corpus reflects actual language use rather than formal written style.

- Best for: LLM pre-training corpora, domain-specific fine-tuning datasets, instruction-following data, chatbot training, search relevance datasets.

Conversational & Dialogue Data Collection

Multi-turn dialogue datasets for chatbot and virtual assistant training — collected from real human interactions in natural conversational registers. We design conversation scenarios, recruit contributors matching your target user demographics, and deliver structured dialogue data with intent labels and turn annotations.

- Best for: Conversational AI, customer service chatbots, virtual assistants, helpdesk automation, multi-turn dialogue models.
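
As an illustration only, a structured dialogue record with intent labels and turn annotations might look like the sketch below. The field names (`dialogue_id`, `speaker`, `intent`, and so on) and the JSON Lines layout are assumptions made for this example, not a fixed LanguageMark delivery schema; the actual format follows your specification.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class Turn:
    speaker: str                  # hypothetical role label, e.g. "user" or "agent"
    text: str                     # the utterance as written or transcribed
    intent: Optional[str] = None  # intent label, if this turn carries one


@dataclass
class Dialogue:
    dialogue_id: str
    language: str                 # language tag, e.g. "hi" for Hindi
    domain: str
    turns: List[Turn] = field(default_factory=list)


def to_jsonl_line(dialogue: Dialogue) -> str:
    """Serialise one dialogue as a single JSON Lines record."""
    # ensure_ascii=False keeps non-Latin scripts readable in the output file.
    return json.dumps(asdict(dialogue), ensure_ascii=False)


# Illustrative record: a two-turn, romanised Hindi customer-service exchange.
example = Dialogue(
    dialogue_id="dlg-0001",
    language="hi",
    domain="customer_service",
    turns=[
        Turn("user", "mera order kab aayega?", intent="order_status"),
        Turn("agent", "aapka order kal tak pahunch jayega."),
    ],
)
print(to_jsonl_line(example))
```

Keeping one dialogue per line makes it straightforward to stream, sample, and validate large deliveries without loading the whole file.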

Indic Language Specialised Data Collection

Purpose-built data collection for all 22 official Indian languages — including regional dialect variants, script-specific collection, code-switching patterns, and rural/urban register diversity. We work with native-speaking contributors who have been verified for linguistic accuracy by our in-house language team.

This is not generic crowd-sourced data. It is professionally managed, linguistically verified, and built to the quality standards Indian AI models actually need.

- Best for: Sovereign AI initiatives, Indic LLM development, government AI programmes, voice AI for Bharat, Indian language NLP research.

RLHF & Human Preference Data Collection

Human feedback data for Reinforcement Learning from Human Feedback (RLHF) and Direct Preference Optimisation (DPO), including response ranking, quality evaluation, and preference labelling by domain-expert contributors in your target language. We manage contributor recruitment, task design, quality calibration, and delivery.

- Best for: LLM fine-tuning and alignment teams, AI safety researchers, companies building domain-specific AI applications that need culturally grounded human feedback.
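
To make the idea of quality calibration concrete, here is a minimal sketch of how pairwise preference votes from several annotators could be aggregated, with an agreement score that flags items needing a second look. The function name, the "A"/"B" labels, and the tie handling are assumptions for illustration; they do not describe a specific LanguageMark workflow.

```python
from collections import Counter
from typing import List, Optional, Tuple


def aggregate_preference(votes: List[str]) -> Tuple[Optional[str], float]:
    """Return (winning label, agreement) for one comparison item.

    votes: one label per annotator, e.g. "A" or "B" (hypothetical labels).
    agreement: share of annotators who chose the most common label.
    A tie for first place returns None as the winner so the item can be
    sent for re-review instead of being resolved arbitrarily.
    """
    if not votes:
        raise ValueError("at least one vote is required")
    ranked = Counter(votes).most_common()
    top_label, top_count = ranked[0]
    agreement = top_count / len(votes)
    if len(ranked) > 1 and ranked[1][1] == top_count:
        return None, agreement
    return top_label, agreement


winner, agreement = aggregate_preference(["A", "A", "B"])
print(winner, round(agreement, 2))
```

Items with low agreement are often the most informative ones to discuss during annotator calibration, since they tend to expose ambiguous guidelines.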

Multimodal Data Collection

Combined speech, text, and image data collection for multimodal AI training — including image-caption pairs, video-transcript datasets, and audio-visual dialogue data. Collected with multilingual metadata and culturally appropriate content for Indian and international markets.

- Best for: Multimodal LLM training, video AI, image-text models, audio-visual scene understanding, document AI.

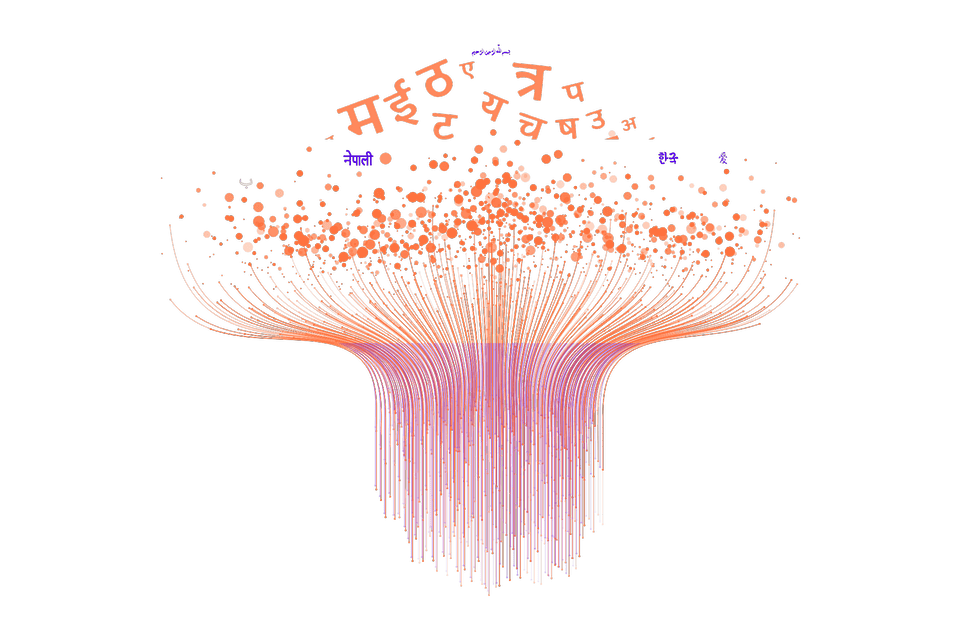

- Scheduled Indian Languages

Hindi · Bengali · Telugu · Marathi · Tamil · Urdu · Gujarati · Kannada · Odia · Malayalam · Punjabi · Assamese · Maithili · Santali · Kashmiri · Nepali · Sindhi · Dogri · Konkani · Manipuri · Bodo · Sanskrit

- Regional Dialects (on request)

Awadhi · Bhojpuri · Rajasthani · Bundeli · Chhattisgarhi · Kumaoni · Garhwali · Tulu · Kodava · Konkani variants

- Code-Mixed Variants

Building AI for India?

Your Data Needs to Reflect India.

India is not one language market. It is 22 official languages, hundreds of dialects, multiple scripts, and some of the most complex code-switching patterns in the world. A model trained on Hindi alone will fail in Tamil Nadu. A model trained on standard written Hindi will fail in Bhojpuri-speaking districts. A model trained on formal register will fail in casual mobile voice interactions.

LanguageMark has been working in Indian languages professionally since 2014. We understand the linguistic reality of India — not just its official language list. Our data collection programme for Indic languages includes:

- All 22 constitutionally recognised Indian languages

- Regional dialect variants including Awadhi, Bhojpuri, Rajasthani, and others

- Both formal and colloquial registers per language

- Script variants where applicable (Devanagari, Tamil script, Telugu script, etc.)

- Code-mixed Hindi-English in urban and semi-urban registers

- Age and gender diversity built into every collection design
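
One way to picture diversity being built into the collection design is a speaker quota per demographic cell, checked as recruitment proceeds. The sketch below is a simplified assumption: it splits a target speaker count evenly across age band, gender, and region combinations, whereas a real design would usually weight cells to match the target user population. All names and category values are hypothetical.

```python
from collections import Counter
from itertools import product
from typing import Dict, Iterable, List, Tuple

Cell = Tuple[str, str, str]  # (age band, gender, region)


def build_quota(total_speakers: int, age_bands: List[str],
                genders: List[str], regions: List[str]) -> Dict[Cell, int]:
    """Split the speaker target evenly across all demographic cells."""
    cells = list(product(age_bands, genders, regions))
    base, extra = divmod(total_speakers, len(cells))
    # The first `extra` cells absorb the remainder so the totals add up.
    return {cell: base + (1 if i < extra else 0) for i, cell in enumerate(cells)}


def unmet_cells(quota: Dict[Cell, int], recruited: Iterable[Cell]) -> Dict[Cell, int]:
    """Return the remaining shortfall for every cell still below quota."""
    have = Counter(recruited)
    return {cell: need - have[cell] for cell, need in quota.items() if have[cell] < need}


quota = build_quota(10, ["18-30", "31-50"], ["f", "m"], ["urban", "rural"])
shortfall = unmet_cells(quota, [("18-30", "f", "urban")])
print(sum(quota.values()), len(shortfall))
```

Tracking the shortfall per cell during recruitment makes gaps visible early, rather than at delivery.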

How We Build Your Dataset

Step 1: Requirements scoping

Language pairs, domain, demographic profile, collection environment, format requirements, volume, and quality targets documented before recruitment begins.

Step 2: Contributor recruitment and vetting

Native-speaking contributors recruited and screened for language proficiency, domain familiarity, and recording environment quality. Contributors sign consent and data ownership agreements before participation.

Step 3: Pilot collection

A small pilot batch collected, reviewed by our linguistic QA team, and validated against your schema. Feedback incorporated before full-scale production begins. No large-scale collection starts without a passed pilot.

Step 4: Production collection with QA sampling

Full-scale collection with continuous quality sampling throughout. Audio quality checks, transcription accuracy verification, and linguistic correctness reviewed at regular intervals — not only at final delivery.

Step 5: Linguistic review and annotation

Collected data reviewed by in-house linguists for accuracy, naturalness, and schema compliance. Transcriptions verified, annotations applied, and metadata formatted to specification.

Step 6: Delivery and documentation
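
The pilot gate in Step 3 and the continuous sampling in Step 4 can be sketched roughly as follows. This is an illustrative outline under stated assumptions: the sampling rate, the pass threshold, and the function names are hypothetical, and the review verdicts would come from human linguists rather than from code.

```python
import random
from typing import Dict, List, Sequence


def draw_qa_sample(record_ids: Sequence[str], rate: float, seed: int = 0) -> List[str]:
    """Pick a reproducible random subset of records for linguistic review."""
    if not 0 < rate <= 1:
        raise ValueError("rate must be in the interval (0, 1]")
    if not record_ids:
        return []
    # Always review at least one record, never more than exist.
    k = min(len(record_ids), max(1, round(len(record_ids) * rate)))
    return random.Random(seed).sample(list(record_ids), k)


def batch_passes(review_results: Dict[str, bool], min_pass_rate: float) -> bool:
    """Gate a batch on the share of reviewed records marked acceptable."""
    if not review_results:
        return False  # nothing reviewed means nothing validated
    pass_rate = sum(review_results.values()) / len(review_results)
    return pass_rate >= min_pass_rate


utterances = [f"utt-{i:04d}" for i in range(100)]
sample = draw_qa_sample(utterances, rate=0.1, seed=1)
# Hypothetical verdicts: in practice each one is recorded by a reviewer.
verdicts = {record_id: True for record_id in sample}
print(len(sample), batch_passes(verdicts, min_pass_rate=0.95))
```

A fixed seed keeps the sample reproducible, so a reviewed subset can be re-audited later against the same records.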

AI Applications We Build Data For

Large Language Models (LLMs)

Domain-specific text corpora, instruction-following datasets, RLHF preference data, and evaluation sets for LLM training and fine-tuning — in Indian languages and global languages.

Automatic Speech Recognition (ASR)

Read-aloud utterances, spontaneous speech, command-and-control phrases, and conversational recordings in multiple acoustic environments. Designed to train models that work across accents, ages, and noise conditions — not just studio-quality recordings.

Text-to-Speech (TTS)

Expressive, natural-sounding speech recordings from professional and semi-professional voice contributors. Covers multiple speaking styles — neutral, expressive, conversational — with script design included in the workflow.

Conversational AI & Chatbots

Multi-turn dialogue datasets in natural conversational registers — collected from real human interactions in your target domain, language, and demographic profile.

Voice Assistants & Wake Words

Wake word recordings, command utterances, and short-form voice interaction data — with sufficient speaker diversity and acoustic variety to build robust detection models.

Document & Multimodal AI

Frequently Asked Questions (FAQ)

Q: What is AI training data collection?

Q: Why do Indian AI models need specialised data collection?

Q: What Indian languages do you collect data in?

Q: How much data do I need for ASR or NLP model training?

Q: How do you handle data ownership and consent?

Q: Can I start with a small pilot before committing to a larger project?

You May Also Need

Text, audio, image, and video annotation for AI training — in 197+ languages. NER, sentiment, intent, image segmentation, and LLM evaluation data built by domain-specialist linguists.

Overview of all our AI data services — annotation, collection, dataset QA, and RLHF data — in one place.

Professional audio transcription in 197+ languages including all Indian languages. AI transcription review and verification also available.

Tell Us What You're Building.

We'll Tell You What Data You Need.